_A talk given on the second day of the conference_ [Off the Press](http://digitalpublishingtoolkit.org/22-23-may-2014/program/) _held at WORM, Rotterdam, on May 23, 2014. Also available in [PDF](/images/2/28/Barok_2014_Communing_Texts.pdf "Barok 2014 Communing Texts.pdf")._

I am going to talk about publishing in the humanities, including scanning

culture, and its unrealised potentials online. For this I will treat the

internet not only as a platform for storage and distribution but also as a

medium with its own specific means for reading and writing, and consider the

relevance of plain text and its various rendering formats, such as HTML, XML,

markdown, wikitext and TeX.

One of the main reasons why books today are downloaded and bookmarked but

hardly read is the fact that they may contain something relevant but they

begin at the beginning and end at the end; or at least we are used to treating

them in this way. E-book readers and browsers are equipped with fulltext

search functionality but the search for "how does the internet change the way

we read" yields nothing of interest, only a diversion of attention,

even though dozens of books have been written on this issue. When insistent,

one easily ends up with a folder of dozens of other books, stuck on how

to read them. There is a plethora of books online, yet there are indeed mostly

machines reading them.

It is surely tempting to celebrate or to despise the age of artificial

intelligence, flat ontology and narrowing down the differences between humans

and machines, and to write books as if only for machines or return to the

analogue, but we may as well look back and reconsider the beauty of simple

linear reading of the age of print, not for nostalgia but for what we can

learn from it.

This perspective implies treating texts in their context, and particularly in

the way they commute, how they are brought in relations with one another, into

a community, by the mere act of writing, through a technique that has

developed over time into what we have come to call _referencing_. While in the

early days referring to texts was practised simply as verbal description of a

referred writing, over millennia it evolved into a technique with standardised

practices and styles, and accordingly: it gained _precision_. This precision

is however nothing machinic, since referring to particular passages in other

texts instead of texts as wholes is an act of comradeship because it spares

the reader time when locating the passage. It also makes apparent that it is

through contexts that the web of printed books has been woven. But even though

referencing in its precision has been meant to be very concrete, particularly

the advent of the web made apparent that it is instead _virtual_. And for the

reader, laborious to follow. The web has shown and taught us that a reference

from one document to another can be plastic. To follow a reference from a

printed book the reader has to stand up, walk down the street to a library,

pick up the referred volume, flip through its pages until the referred one is

found and then follow the text until the passage most probably implied in the

text is identified, while on the web the reader, _ideally_, merely moves her

finger a few millimetres. To click or tap; the difference between the long way

and the short way is obviously the hyperlink. Of course, in the absence of the

short way, even scholars are used to following the reference the long way only as

an exception: an unwritten rule was established to write for readers who

are familiar with the literature in the respective field (which in turn reproduces

the disciplinarity of the reader and writer), while in the case of unfamiliarity

with the referred passage the reader infers its content by interpreting the

writer's interpretation of it. The beauty of reading across references was

never fully realised. But now our question is, can we be so certain that this

practice is still necessary today?

The web silently brought about a way to _implement_ the plasticity of this

pointing although it has not been realised as the legacy of referencing as we

know it from print. Today, when linking a text and having a particular passage

in mind, and even describing it in detail, the majority of links physically

point merely to the beginning of the text. Hyperlinks are linking documents as

wholes by default and the use of anchors in texts has been hardly thought of

as a _requirement_ to enable precise linking.

If we look at popular online journalism and its use of hyperlinks within the

text body we may claim that rarely can anyone afford to read all those linked

articles, let alone reports hundreds of pages long and the like,

and that if something is wrong, it would get corrected via comments anyway. On the

internet, the writer is meant to be in more immediate feedback with the

reader. But readers are not always keen to comment, and they are not always

allowed to. We may be easily driven to forget that quoting half of a

sentence is never quoting a full sentence, and if there ought to be the entire

quote, its source text in its whole length would need to be quoted. Think of

the quote _information wants to be free_, which is rarely quoted with its

wider context taken into account. Even factoids, numbers, can be carbon-quoted

but if taken out of the context their meaning can be shaped significantly. The

reason for the aversion to following a reference may well be that we are usually

pointed to begin reading another text from its beginning.

Yet this is exactly where the practices of linking as on the web and

referencing as in scholarly work may benefit from one another. The question is

_how_ to bring them closer together.

An approach I am going to propose requires a conceptual leap to something we

have not been taught.

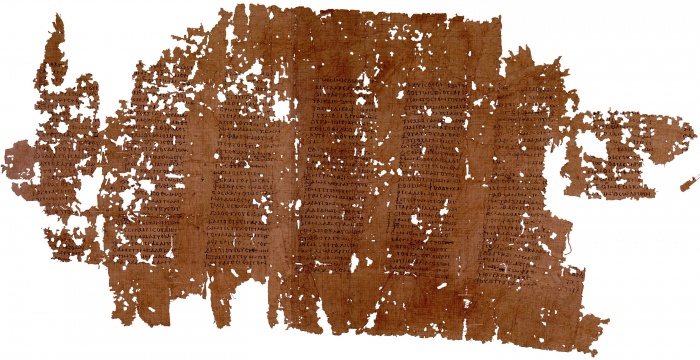

For centuries, the primary format of the text has been the page, a vessel, a

medium, a frame containing text embedded between straight, more or less

explicit, horizontal and vertical borders. Even before the material of the

page such as papyrus and paper appeared, the text was already contained in

lines and columns, a structure which we have learnt to perceive as a grid. The

idea of the grid allows us to view text as being structured in lines and

pages, which are in turn at hand if something is to be referred to. Pages are

counted as the distance from the beginning of the book, and lines as the

distance from the beginning of the page. It is not surprising because it is in

accord with the inherent quality of its material medium -- a sheet of paper has a

shape which in turn shapes the body of a text. This tradition goes back as far as

ancient times and the bookroll, in which we indeed find textual grids.

[Papyrus of Plato's _Phaedrus_](/File:Papyrus_of_Plato_Phaedrus.jpg)

A crucial difference between print and digital is that neither HTML

documents nor markdown documents nor database-driven texts inherited this

quality. Their containers are simply not structured into pages, precisely

because of the nature of their materiality as media. Files are written on

memory drives in scattered chunks, beginning at point A and ending at point B

of a drive, continuing from C until D, and so on. Where each of these

chunks starts is ultimately independent of what it contains.

Forensic archaeologists would confirm that when a portion of a text survives,

in the case of ASCII documents it is not a page here and page there, or the

first half of the book, but textual blocks from completely arbitrary places of

the document.

This may sound unrelated to how we, humans, structure our writing in HTML

documents, emails, Office documents, even computer code, but it is a reminder

that we structure them for habitual (interfaces are rectangular) and cultural

(human-readability) reasons rather than for a technical necessity that would

stem from material properties of the medium. This distinction is apparent for

example in HTML, XML, wikitext and TeX documents with their content being both

stored on the physical drive and treated when rendered for reading interfaces

as a single flow of text, and the same goes for other texts when treated with

the automatic line-break setting turned off. After all, line-breaks, spaces and

everything else are merely numbers corresponding to symbols in a character set.
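
The point that line-breaks and spaces are merely numbers can be made concrete with a minimal Python sketch. The function name `as_code_points` and the sample string are illustrative assumptions, not part of the talk; the sketch only shows that what a reader sees as a grid is stored as one flat sequence.

```python
def as_code_points(text):
    """Return the text as a flat list of Unicode code points."""
    return [ord(ch) for ch in text]


# Hypothetical sample: two "lines" as a reader would see them.
sample = "one\ntwo three"
codes = as_code_points(sample)

# The line-break and the space are ordinary members of the sequence.
newline_code = codes[3]   # the character between "one" and "two"
space_code = codes[7]     # the character between "two" and "three"

# Rebuilding the text from the numbers loses nothing.
restored = "".join(chr(n) for n in codes)
```

Seen this way, a "line" or a "page" is a property of rendering, not of the stored sequence.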

So how to address a section in this kind of document? An option offers itself

-- how computers do, or rather how we made them do it -- as a position of the

beginning of the section in the array, in one long line. It would mean

treating the text document not in its grid-like format but as a line, which merely

adapts to the properties of its display when rendered, as is nicely implied in

the animated logo of this event and as we know it from EPUBs, for example.

In the case of 'reference-linking' we can refer to a passage by including the

information about its beginning and length determined by the character

position within the text (in analogy to the _pp._ operator used for printed

publications) as well as the text version information (in printed texts served

by edition and date of publication). So what is common in printed text as the

page information is here replaced by the character position range and version.

Such a reference-link is more precise, as it addresses a particular section of a

particular version of a document regardless of how it is rendered on an

interface.
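
What such a reference-link might carry can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class `ReferenceLink`, the helper names, the use of a truncated SHA-256 hash as version information, and the fragment syntax produced by `to_fragment` are all hypothetical and do not describe any existing standard or implementation.

```python
import hashlib
from dataclasses import dataclass


@dataclass(frozen=True)
class ReferenceLink:
    version: str  # assumption: a short hash identifying the exact text version
    start: int    # character position where the passage begins
    length: int   # number of characters in the passage


def version_of(text):
    """Derive a version identifier from the full text (hypothetical scheme)."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def make_link(text, passage):
    """Build a link to the first occurrence of `passage` in `text`."""
    start = text.find(passage)
    if start == -1:
        raise ValueError("passage not found in text")
    return ReferenceLink(version_of(text), start, len(passage))


def resolve(text, link):
    """Return the referred passage, refusing texts of a different version."""
    if version_of(text) != link.version:
        raise ValueError("link refers to a different version of the text")
    return text[link.start:link.start + link.length]


def to_fragment(link):
    """Serialise the link as a URL fragment (made-up syntax)."""
    return f"#v={link.version}&start={link.start}&len={link.length}"
```

For example, `make_link(text, "distance")` followed by `resolve(text, link)` returns the same passage however the text is wrapped or paginated on screen, while any change to the text changes the version and makes the link fail loudly rather than point to the wrong place.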

It is a relatively simple idea and its implementation does not seem to be

very hard, although I wonder why it has not been implemented already. I

discussed it with several people yesterday to find out there were indeed

already attempts in this direction. Adam Hyde pointed me to a proposal for

_fuzzy anchors_ presented on the blog of the Hypothes.is initiative last year,

which in order to overcome the need for versioning employs diff algorithms to

locate the referred section, although it is too complicated to be explained in

this setting.[1] Aaaarg has recently implemented in its PDF reader an option

to generate URLs for a particular point in the scanned document which itself

is a great improvement although it treats texts as images, thus being specific

to a particular scan of a book, and generated links are not public URLs.
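
The general idea of locating a referred passage in a changed text without version information can be illustrated very roughly. The sketch below is not the Hypothes.is implementation; it is a simplified sliding-window comparison built on Python's standard `difflib`, and the function name `fuzzy_locate` and the 0.8 threshold are assumptions chosen for illustration.

```python
from difflib import SequenceMatcher


def fuzzy_locate(text, quote, threshold=0.8):
    """Find where `quote` most plausibly sits in `text`.

    Slides a window of len(quote) over the text and scores each window
    by its similarity to the quote. Returns (start, score) for the best
    window, or None if nothing reaches the threshold. The cost grows with
    len(text) * len(quote), so this is a demonstration, not a tool.
    """
    best_start, best_score = -1, 0.0
    last_start = max(1, len(text) - len(quote) + 1)
    for start in range(last_start):
        window = text[start:start + len(quote)]
        score = SequenceMatcher(None, quote, window, autojunk=False).ratio()
        if score > best_score:
            best_start, best_score = start, score
    if best_score < threshold:
        return None
    return best_start, best_score
```

A quote taken from an earlier wording of a sentence can still be found after a small revision, since the window that differs by a word or two scores close to 1. The trade-off is that the quoted passage itself has to be stored with the reference.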

Using the character position in references requires an agreement on how to

count. There are at least two options. One is to include all source code in

positioning, which means measuring the distance from the anchor such as the

beginning of the text, the beginning of the chapter, or the beginning of the

paragraph. The second option is to make a distinction between operators and

operands, and count only in operands. Here there are further options as to where to

draw the line between them. We can consider as operands only characters with

phonetic properties -- letters, numbers and symbols, stripping the text of

operators that are there to shape sonic and visual rendering of the text such

as whitespaces, commas, periods, HTML and markdown and other tags so that we

are left with the body of the text to count in. This would mean to render

operators unreferrable and count as in _scriptio continua_.
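
The second option, counting only in operands, can be sketched as follows. The sketch makes simplifying assumptions that are not in the talk: only letters and digits count as operands (symbols are left out), only HTML-like tags are recognised, by a naive regular expression, and the function names are hypothetical.

```python
import re

# Naive pattern for HTML-like tags; real markup needs a proper parser.
TAG = re.compile(r"<[^>]*>")


def operand_positions(source):
    """Return the source indices of all characters counted as operands."""
    masked = set()
    for match in TAG.finditer(source):
        masked.update(range(match.start(), match.end()))
    return [i for i, ch in enumerate(source)
            if i not in masked and ch.isalnum()]


def scriptio_continua(source):
    """The text reduced to its operands, as one continuous line."""
    return "".join(source[i] for i in operand_positions(source))


def locate(source, start, length):
    """Map an operand range (start, length) back to a slice of the source."""
    positions = operand_positions(source)
    first = positions[start]
    last = positions[start + length - 1]
    return source[first:last + 1]
```

With the source `<p>The <em>web</em>, woven.</p>`, the continuous line is `Thewebwoven`, and the operand range starting at 3 with length 3 maps back to `web` regardless of the tags and punctuation around it. A range that spans markup, such as start 0 with length 6, returns the source slice including the operators in between.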

_Scriptio continua_ is a very old example of the linear, one-dimensional

treatment of the text. Let's look again at the bookroll with Plato's writing.

Even though it is 'designed' into grids, on a closer look it reveals the lack

of any other structural elements -- there are no spaces, commas, periods or

line-breaks, the text is merely one flow, one long line.

_Phaedrus_ was written in the fourth century BC (this copy comes from the

second century AD). Word and paragraph separators were reintroduced much

later, between the second and sixth century AD when rolls were gradually

transcribed into codices that were bound as pages and numbered (a dramatic

change in publishing comparable to digital changes today).[2]

'Reference-linking' has not been prominent in discussions about sharing books

online and I only came to realise its significance during my preparations for

this event. There is a tremendous amount of very old, recent and new texts

online but we haven't done much in opening them up to contextual reading. In

this there are publishers of all 'grounds' together.

We are equipped to treat the internet not only as repository and library but

to take into account its potentials of reading that have been hiding in front

of our very eyes. To expand the notion of hyperlink by taking into account

techniques of referencing and to expand the notion of referencing by realising

its plasticity which has always been imagined as if it is there. To mesh texts

with public URLs to enable entanglement of referencing and hyperlinks. Here,

open access gains its further relevance and importance.

Dušan Barok

_Written May 21-23, 2014, in Vienna and Rotterdam. Revised May 28, 2014._

Notes

1. Proposals for paragraph-based hyperlinking can be traced back to the work of Douglas Engelbart, and today there are a number of related ideas, some of which were implemented on a small scale: fuzzy anchoring, [1](http://hypothes.is/blog/fuzzy-anchoring/); purple numbers, [2](http://project.cim3.net/wiki/PMWX_White_Paper_2008); robust anchors, [3](http://github.com/hypothesis/h/wiki/robust-anchors); _Emphasis_, [4](http://open.blogs.nytimes.com/2011/01/11/emphasis-update-and-source); and others [5](http://en.wikipedia.org/wiki/Fragment_identifier#Proposals). Their dependence on structural elements such as paragraphs is one of their shortcomings, making them unsuitable for texts with longer paragraphs (e.g. Adorno's _Aesthetic Theory_), visual poetry or computer code; another is the requirement to store anchors along the text.

2. Works which happened not to be of interest at the time ceased to be copied and mostly disappeared. On the book roll and its gradual replacement by the codex see William A. Johnson, "The Ancient Book", in _The Oxford Handbook of Papyrology_, ed. Roger S. Bagnall, Oxford, 2009, pp. 256-281, [6](http://google.com/books?id=6GRcLuc124oC&pg=PA256).

Addendum (June 9)

Arie Altena wrote a [report from the panel](http://digitalpublishingtoolkit.org/2014/05/off-the-press-report-day-ii/) published on the website of the Digital Publishing Toolkit initiative, followed by another [summary of the talk](http://digitalpublishingtoolkit.org/2014/05/dusan-barok-digital-imprint-the-motion-of-publishing/) by Irina Enache.

The online repository Aaaaarg [has introduced](http://twitter.com/aaaarg/status/474717492808413184) the reference-link function in its document viewer, see [an example](http://aaaaarg.fail/ref/60090008362c07ed5a312cda7d26ecb8#0.102).

Traditional libraries are increasingly putting their holdings online, if not

in competition with Google Books then in partnership, in order to keep pace

with the mass digitization of content. Yet it isn't only the big institutional

actors that are driving this process forward: small-scale, independent

initiatives based on open source principles offer interesting approaches to

re-defining the role and meaning of the library, writes Alessandro Ludovico.

A deep conflict is brewing silently in libraries around the globe. Traditional

librarians - skilled, efficient and acknowledged - are being threatened by

bosses, themselves trying to cope with substantial funding cuts, with the word

"digital", touted as a panacea for saving space and money. At the same time,

in other (less traditional) places, there is a massive digitization of books

underway aimed at establishing virtual libraries much bigger than any

conventional one. These phenomena are calling into question the library as a point of

reference and as a public repository of knowledge. Not only is its bulky

physicality being questioned, but also the core idea that, after the advent of

truly ubiquitous networks, we still need a central place to store, preserve,

index, lend and share knowledge.

Tablet-PC on hardcover book. Photo: Anton Kudelin. Source: Shutterstock

It is important not to forget that traditional libraries (public and private)

still guarantee the preservation of and access to a huge number of digitally-

unavailable texts, and that a book's material condition sometimes tells part

of the story, not to mention the experience of reading it in a library. Still,

it is evident that we are facing the biggest digitization ever attempted, in a

process comparable to what Napster meant for music in the early 2000s. But

this time there are many more "institutional" efforts running simultaneously,

so that we are constantly hearing announcements that new historical material

has been made accessible online by libraries and institutions of all sizes.

The biggest digitizers are Google Books (private) and Internet Archive

(non-profit). The former is officially aiming to create a privately owned,

"universal library", which in April 2013 claimed to contain 30 million

digitized books.1 The latter is an effort to make a comparably huge public

library by using Creative Commons licenses and getting rid of Digital Rights

Management chains, and currently claims to hold almost 5 million digitized

books.

These monumental efforts are struggling with one specific element: the time it

takes to create digital content by converting it from another medium. This

process, of course, creates accidents. Krissy Wilson's blog/artwork _The Art

of Google Books_2 explores daily the non-digital elements (accidental or not)

emerging in scanned pages, which can be purely material - such as scribbled

notes, parts of the scanning person's hand, dried flowers - or typographical

or linguistic, or deleted or missing parts, all of them precisely annotated.

This small selection of illustrations of how physicality causes technology to

fail may be self-reflective, but it shows a particular aspect of a larger

development. In fact, industrial scanning is only one side of the coin. The

other is the private and personal digitization and sharing of books.

On the basis of brilliant open source tools like the DIY Bookscanner,3 there

are various technical and conceptual efforts to build specialist digital

libraries. _Monoskop_4 is exemplary: its creator Dusan Barok has transformed

his impressive personal collection of media (about contemporary art, culture

and politics, with a special focus on eastern Europe) into a common resource,

freely downloadable and regularly updated. It is a remarkably inspired

selection that can be shared regardless of possible copyright restrictions.

_Monoskop_ is an extreme and excellent example of a personal digital library

made public. But any small or big collection can be easily shared. Calibre5 is

open source software that enables one to efficiently manage a personal

library and to create temporary or stable autonomous zones in which entire

libraries can be shared among a few friends or entire communities.

Marcell Mars,6 a hacktivist and programmer, has worked intensively around this

subject. Together with Tomislav Medak and Vuk Cosic, he organized the HAIP

2012 festival in Ljubljana, where software developers worked collectively on a

complex interface for searching and downloading from major independent online

e-book collections, turning them into a sort of temporary commons. Mars'

observation that, "when everyone is a librarian, the library is everywhere,"

explains the infinite and recursive de-centralization of personal digital

collections and the role of the digital in granting much wider access to

published content.

This access, however, emphasizes the intrinsic fragility of the digital - its

complete dependence on electricity and networks, on the integrity of storage

media and on up-to-date hardware and software. Among the few artists to have

conceptually explored this fragility as it affects books is David Guez, whose

work _Humanpédia_7 can be defined as an extravagant type of "time-based art".

The work is clearly inspired by Ray Bradbury's _Fahrenheit 451_, in which a

small secret community conspires against a total ban on books by memorizing

entire tomes, preserving and orally transmitting their contents. Guez applies

this strategy to Wikipedia, calling for people to memorize a Wikipedia

article, thereby implying that our brains can store information more reliably

than computers.

So what, in the end, will be the role of old-fashioned libraries?

Paradoxically enough, they could become the best place to learn how to

digitize books or how to print out and bind digitized books that have gone out

of print. But they must still be protected as a common good, where cultural

objects can be retrieved and enjoyed anytime in the future. A timely work in

this respect is La Société Anonyme's _The SKOR Codex_.8 The group (including

Dusan Barok, Danny van der Kleij, Aymeric Mansoux and Marloes de Valk) has

printed a book whose content (text, pictures and sounds) is binary encoded,

with enclosed visual instructions about how to decode it. A copy will be

indefinitely preserved at the Bibliothèque nationale de France by signed

agreement. This work is a time capsule, enclosing information meant to be

understood in the future. At any rate, we can rest assured that it will be

there (with its digital content), ready to be taken from the shelf, for many

years to come.